The AI Radiology Blog – Chapter 1: What is AI

Published by Aina Tersol on May 02, 2022

Through a series of blog-format entries, this work aims to provide a comprehensive review of Artificial Intelligence (AI), as applied to the field of Veterinary Medicine, building a bridge between clinical practice and technology for the purposes of improving patient care.

What is AI?

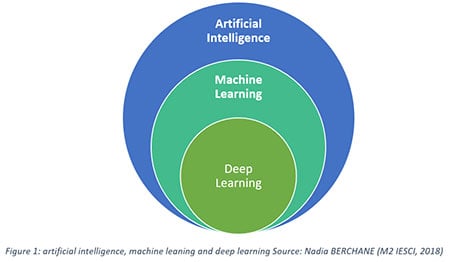

Artificial Intelligence (AI), Machine Learning (ML), and Deep Learning (DL) are all closely related concepts that, while sometimes used interchangeably (particularly in the corporate environment), refer to different things.

Broadly speaking, AI is defined as an algorithm that mimics human intelligence, but in practice the boundaries of the term vary depending on the source.* Some consider even basic logic (such as “if-else” statements) that guides the behavior of an algorithm in specific situations to be AI. Others, in contrast, limit AI to the autonomous resolution of problems and the ability to learn (i.e., models capable of self-improvement).

At SignalPET, and for the purposes of this blog, AI is understood as an algorithm that can simulate human behavior and has the ability to learn.

Machine Learning (ML), on the other hand, is an application of AI in which the learning process is autonomous; in other words, the model improves with experience. An ML model infers patterns from the data, which allows it to make predictions on unseen instances.
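To make the distinction concrete, here is a minimal sketch contrasting a hand-written “if-else” rule with a model whose decision threshold is inferred from data. The body weights, the 10 kg cut-off, and the function names are all invented for illustration; no real classifier would separate cats from dogs on weight alone.

```python
# Hypothetical toy data: (body weight in kg, label). Values are invented.
training_data = [(3.5, "cat"), (4.2, "cat"), (5.0, "cat"),
                 (12.0, "dog"), (20.5, "dog"), (30.0, "dog")]

def rule_based(weight_kg):
    """Hand-written 'if-else' logic: the threshold is fixed by the programmer."""
    if weight_kg < 10.0:
        return "cat"
    else:
        return "dog"

def learn_threshold(data):
    """Infer the threshold from labeled examples: midpoint between class means."""
    cats = [w for w, label in data if label == "cat"]
    dogs = [w for w, label in data if label == "dog"]
    return (sum(cats) / len(cats) + sum(dogs) / len(dogs)) / 2

def learned_model(weight_kg, threshold):
    """Same decision shape as the rule, but the threshold comes from the data."""
    return "cat" if weight_kg < threshold else "dog"

threshold = learn_threshold(training_data)
print(rule_based(6.0), learned_model(6.0, threshold))
```

The rule never changes unless someone edits it, whereas calling `learn_threshold` again with additional examples moves the threshold. That is the sense in which the model “improves with experience.”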

Lastly, Deep Learning (DL) is a type of machine learning based on neural networks: essentially, sets of nodes organized in layers that allow the algorithm to improve itself (in a way, learning from data processing).

Deep Learning vs Machine Learning

Simplistically, Deep Learning (DL) can be seen as a subfield of Machine Learning (ML), and Machine Learning (ML) as a subset of Artificial Intelligence (AI).

Let’s use the common (and fitting) example of “cat vs. dog.” This would be considered a basic “object recognition” problem. Most humans have learned over time what a cat and a dog look like, and the association of a particular feature, or a combination of features, leads one to identify the animal as one or the other. Similarly, in the AI world, that association or “workflow” could be represented by an Input (for example, an image), a Model (for example, a “black box”), and an Output (a label such as “cat” or “dog”). The user would feed the image into the “black box,” which would return the object’s name (the label).

In classical (non-deep) ML, the features need to be handcrafted: someone has to decide, for example, which region of the image to look at, such as the shape of the ears or the texture of the fur. As such, Machine Learning requires expert labeling and an accurate definition of those specific features. With Deep Learning (DL), on the other hand, the manual feature extraction process is very much reduced. This allows for larger datasets and less structured data inputs, effectively making the process much more scalable.
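The handcrafted-feature workflow can be sketched as follows. This is only an illustration under stated assumptions: the 4x4 “images” are invented, the two features (mean brightness and a crude texture count) are arbitrary stand-ins for things like ear shape or fur texture, and the nearest-centroid classifier is just one simple choice of model.

```python
# Hypothetical 4x4 binary "images" (0 = dark, 1 = bright), invented for illustration.
training_images = {
    "cat": [
        [[0, 1, 0, 1], [1, 0, 1, 0], [0, 1, 0, 1], [1, 0, 1, 0]],
        [[0, 1, 0, 1], [0, 1, 0, 1], [1, 1, 0, 0], [0, 1, 0, 1]],
    ],
    "dog": [
        [[1, 1, 1, 1], [1, 1, 1, 1], [0, 0, 0, 0], [0, 0, 0, 0]],
        [[1, 1, 0, 0], [1, 1, 0, 0], [1, 1, 1, 1], [1, 1, 1, 1]],
    ],
}

def extract_features(image):
    """Hand-crafted features: a human decided these two numbers matter."""
    pixels = [p for row in image for p in row]
    mean_brightness = sum(pixels) / len(pixels)
    # Count horizontal neighbor pairs that differ: a crude "texture" measure.
    edges = sum(1 for row in image for a, b in zip(row, row[1:]) if a != b)
    return (mean_brightness, edges)

def centroid(vectors):
    """Average feature vector of one class."""
    return tuple(sum(v[i] for v in vectors) / len(vectors)
                 for i in range(len(vectors[0])))

# "Training": summarize each class by the mean of its feature vectors.
centroids = {label: centroid([extract_features(img) for img in images])
             for label, images in training_images.items()}

def classify(image, centroids):
    """Input (image) -> Model (nearest centroid) -> Output (label)."""
    features = extract_features(image)
    def squared_distance(center):
        return sum((f - c) ** 2 for f, c in zip(features, center))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))

new_image = [[1, 0, 1, 0], [1, 0, 1, 0], [1, 0, 0, 1], [0, 1, 0, 1]]
print(classify(new_image, centroids))
```

Everything the model can “see” is decided inside `extract_features`. If the chosen features do not separate the classes, no amount of data will fix that, which is the limitation that deep learning relaxes by learning features directly from the raw input.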

For example, if one were thinking of a DL model in the “Cat vs Dog” workflow above, a picture of a cat might be fed into the model (the black box), which would then output the desired label, in this case “cat.” There are a lot of details in how the deep learning black box works (and even more in how it is built); however, it is essentially an optimization problem. During training, the model makes a prediction for every input; upon evaluation, correct predictions reinforce the model, while incorrect ones trigger a change. This process is repeated autonomously and iteratively, usually until the model stabilizes.
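The predict, evaluate, and adjust cycle can be sketched with a perceptron, a single artificial neuron. This is a deliberate simplification and an assumption on our part: real deep learning models stack many layers and are typically trained by gradient descent on a loss function, and in this sketch a correct prediction simply leaves the parameters unchanged rather than reinforcing them. The data points and names are invented.

```python
# Hypothetical, linearly separable toy data: (feature vector, label),
# where label +1 stands for "dog" and -1 for "cat". Values are invented.
data = [((0.5, 11.0), -1), ((0.5, 10.0), -1),
        ((0.5, 0.0), 1), ((0.75, 2.0), 1)]

def predict(weights, bias, x):
    """Weighted sum of the inputs, thresholded into one of two labels."""
    score = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if score >= 0 else -1

def train(data, learning_rate=0.1, max_epochs=100):
    weights = [0.0] * len(data[0][0])
    bias = 0.0
    for epoch in range(max_epochs):
        mistakes = 0
        for x, y in data:
            if predict(weights, bias, x) != y:
                # Incorrect prediction: nudge the parameters toward the right answer.
                weights = [w + learning_rate * y * xi for w, xi in zip(weights, x)]
                bias += learning_rate * y
                mistakes += 1
        if mistakes == 0:
            # A full pass with no errors: the model has stabilized.
            return weights, bias, epoch + 1
    return weights, bias, max_epochs

weights, bias, epochs = train(data)
print(weights, bias, epochs)
```

The loop stops as soon as one full pass over the data produces no errors. On data that cannot be separated by a straight line it would instead run until `max_epochs`, which is one reason practical models use richer architectures and softer stopping criteria.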

While differentiating between a dog and a cat is a task that most of us learn from an early age, differentiating between specific breeds from an image, or between species based on a radiograph, can get more complicated. Similarly, there are “easier” and “harder” DL problems. One way of interpreting this would be to think about DL models as “human kids”: what they learn is dependent upon, and therefore limited to, the knowledge and capacity of their teacher. As such, DL models tend to excel in tasks that humans are able to accurately describe and define, but fail when facing more complex issues that are beyond the understanding of their individual human teacher or input source. In medical imaging in particular, models face challenges related to labeling accuracy and consistency due to the inherently interpretive (and therefore subjective) nature of diagnostics. This same subjectivity is why AI, and DL in particular, can be such a powerful tool for helping to interpret imaging results.

DL model performance can be analyzed and visualized in many ways, ranging from traditional Dice scores and accuracy metrics to more innovative approaches such as graphical representations. The latter are particularly easy to interpret, as they show the relationships within the data in a spatial plane. Essentially, they leverage a common tool in data science called “dimensionality reduction” to construct an embedding that represents the data in a lower dimension, making it possible to visualize the structure and distribution of the data at various scales.
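For reference, the two traditional metrics mentioned above can be computed in a few lines. The labels and masks below are invented examples; accuracy is the fraction of correct labels, and the Dice score measures the overlap between two binary masks as 2|A ∩ B| / (|A| + |B|).

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the reference labels."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)

def dice_score(mask_true, mask_pred):
    """Dice overlap of two binary masks given as flat lists of 0/1."""
    intersection = sum(t * p for t, p in zip(mask_true, mask_pred))
    total = sum(mask_true) + sum(mask_pred)
    # Two empty masks agree perfectly by convention.
    return 1.0 if total == 0 else 2 * intersection / total

# Hypothetical image-level labels.
y_true = ["normal", "pathological", "normal", "pathological"]
y_pred = ["normal", "pathological", "pathological", "pathological"]
print(accuracy(y_true, y_pred))  # 3 of 4 correct -> 0.75

# Hypothetical pixel masks (1 = pixel belongs to the region of interest).
mask_true = [0, 1, 1, 1, 0, 0]
mask_pred = [0, 1, 1, 0, 1, 0]
print(dice_score(mask_true, mask_pred))  # 2*2 / (3+3)
```

Accuracy suits image-level labels such as “normal” vs. “pathological,” while Dice is usually applied when the model outlines a region, since it is not inflated by the large number of background pixels both masks agree on.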

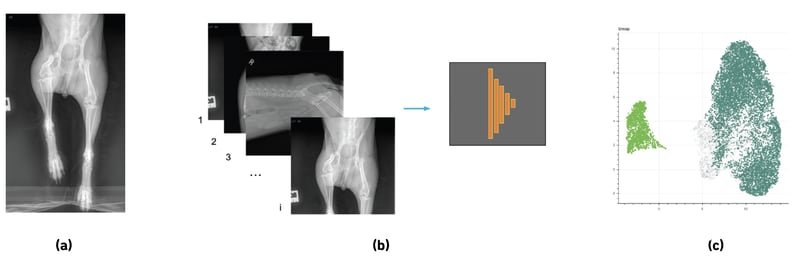

An example of an AI process is illustrated in Figure 2:

Figure 2: (a) X-Ray presenting right hip luxation. (b) Deep Learning Network Example. Where images are fed and the label vector x is generated, classifying each image as ‘normal’ or ‘pathological’ (c) Parametric Embedding example of the outcome of the network, showing two clear spatial clusters for ‘normal’ and ‘pathological’ images.
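As a rough illustration of how dimensionality reduction can reveal such clusters, the sketch below projects 3-dimensional feature vectors onto a single axis using principal component analysis (PCA), computed with power iteration. PCA is our choice for simplicity and is not necessarily the method behind the embedding in Figure 2; the feature vectors are invented.

```python
def mean_center(points):
    """Subtract the per-dimension mean so the data is centered at the origin."""
    dims = len(points[0])
    means = [sum(p[d] for p in points) / len(points) for d in range(dims)]
    return [[p[d] - means[d] for d in range(dims)] for p in points]

def covariance(centered):
    """Sample covariance matrix of already-centered data."""
    n, dims = len(centered), len(centered[0])
    return [[sum(row[i] * row[j] for row in centered) / (n - 1)
             for j in range(dims)] for i in range(dims)]

def first_component(cov, iterations=200):
    """Power iteration: repeatedly multiply by cov and renormalize."""
    vec = [1.0] * len(cov)
    for _ in range(iterations):
        vec = [sum(c * v for c, v in zip(row, vec)) for row in cov]
        norm = sum(v * v for v in vec) ** 0.5
        vec = [v / norm for v in vec]
    return vec

def project(points):
    """Reduce each point to one coordinate along the direction of most variance."""
    centered = mean_center(points)
    axis = first_component(covariance(centered))
    return [sum(a * x for a, x in zip(axis, row)) for row in centered]

# Hypothetical feature vectors, e.g. as produced by a network's last layer.
normal = [(1.0, 1.2, 0.9), (0.8, 1.0, 1.1), (1.2, 0.9, 1.0)]
pathological = [(5.0, 5.1, 4.0), (4.8, 5.3, 4.2), (5.2, 4.9, 3.9)]

embedding = project(normal + pathological)
print([round(v, 2) for v in embedding])
```

In the resulting one-dimensional embedding, the first three values (the “normal” group) and the last three (the “pathological” group) fall on opposite sides of zero, the one-dimensional analogue of the two spatial clusters in panel (c).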

AI in the Veterinary World

The ultimate role of AI in the veterinary world is still being shaped; however, at SignalPET, we believe the objective nature of mathematical models, as well as the possibility of rapidly interpreting large amounts of data, makes AI an extremely useful and beneficial tool for any clinical practice. With SignalPET’s advanced AI solutions, veterinary radiology experts and clinicians can now leverage the speed and accuracy of AI as a decision support tool in order to provide more confident and standardized diagnostics, resulting in better care for their patients.

At SignalPET, we leverage the advantages and possibilities of deep learning to provide more accurate and standardized diagnostics, with an aim to aid veterinary practices in providing better care, and making more informed and confident decisions.

Written by: Aina Tersol

In collaboration with Lior Kuyer, Dr. Emily Angell Crosley

* Sources for interpretations of AI:

Alan Turing (Computing Machinery and Intelligence, 1950)

Stuart Russell and Peter Norvig (Artificial Intelligence: A Modern Approach)

John McCarthy (What Is Artificial Intelligence?, 2004)

Geoff Hinton (2016 Machine Learning and Market for Intelligence Conference in Toronto)